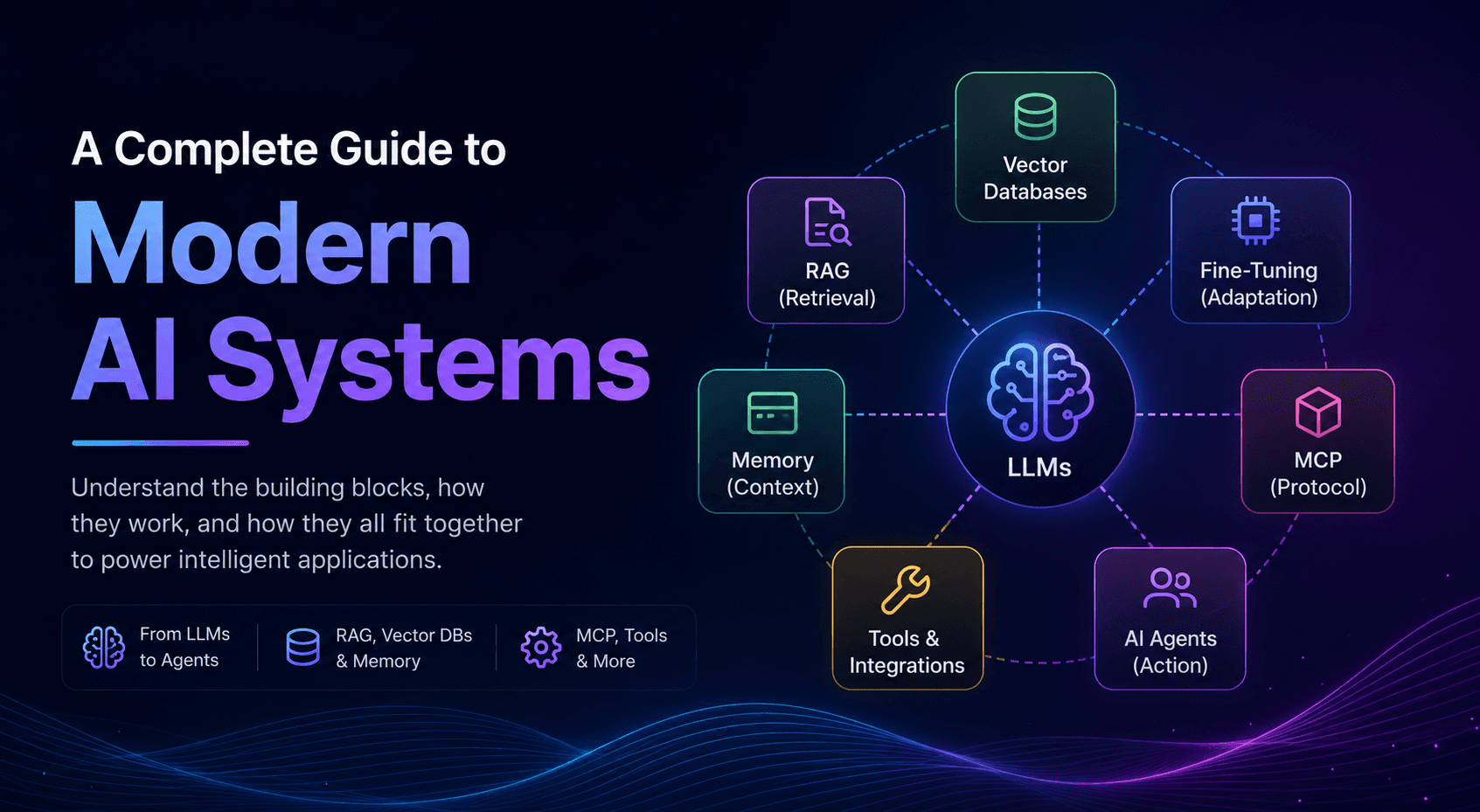

If You Want to Become an AI Engineer, Learn These Concepts First

Modern AI Systems

I'm a keen learner with a good problem solving skills, interested in software engineering(SDE) and web development, looking for new learning opportunities in-order to apply my knowledge & skills to solve real world problems.

Introduction

Artificial Intelligence is evolving faster than ever. Just a few years ago, building AI applications meant training machine learning models from scratch and deploying them with complex infrastructure. Today, developers can build powerful AI systems using Large Language Models (LLMs), retrieval pipelines, vector databases, memory systems, and autonomous agents - all working together as a modern AI stack.

But there’s a problem.

Most aspiring AI engineers jump directly into prompting tools like ChatGPT or Claude without understanding the core concepts that power modern AI applications. As a result, they can use AI tools, but struggle to design reliable, scalable, and production-ready AI systems.

This blog is designed to bridge that gap.

In this guide, we’ll explore the foundational concepts every modern AI engineer should understand - including LLMs, RAG (Retrieval-Augmented Generation), MCP, fine-tuning, vector databases, memory systems, AI agents, and agentic workflows.

Rather than treating these as isolated buzzwords, we’ll understand how they connect together to form real-world AI architectures used in products like ChatGPT, Cursor, Perplexity, Claude, and modern AI copilots.

By the end of this blog, you’ll have:

A strong mental model of the modern AI stack

Clarity on how different AI components interact

Practical understanding of real-world AI architectures

A roadmap for building production-grade AI applications

Whether you’re an aspiring AI engineer, developer, student, or builder exploring the future of AI systems, this guide will help you understand the technologies shaping the next generation of software.

Understanding Large Language Models (LLMs)

Large Language Models (LLMs) are the foundation of modern AI applications. Tools like OpenAI ChatGPT, Anthropic Claude, and Google Gemini are all powered by LLMs trained on massive amounts of text data.

At their core, LLMs are prediction engines.

They learn patterns from enormous datasets and generate text by predicting the most probable next token in a sequence. A token can be a word, subword, punctuation mark, or even part of a sentence depending on the tokenizer being used.

For example, given the sentence:

“Artificial Intelligence is transforming…”

the model predicts what token is most likely to come next based on everything it learned during training.

The Transformer Architecture

Modern LLMs are primarily built on the Transformer architecture introduced in the 2017 paper:

Attention Is All You Need

The Transformer fundamentally changed AI by making it possible for models to understand relationships between words and context much more effectively than previous architectures like RNNs or LSTMs.

The key innovation behind Transformers is self-attention.

Self-attention allows the model to determine which words in a sentence are important relative to each other.

For example, in the sentence:

“The animal didn’t cross the street because it was too tired.”

the model understands that “it” refers to “the animal” rather than “the street.”

Tokens, Context Windows, and Parameters

To understand how LLMs work in practice, three concepts are extremely important:

Tokens

LLMs do not process raw sentences directly. They process tokens.

For example:

“ChatGPT is amazing” may become multiple tokens

Longer prompts consume more tokens

Input + output tokens contribute to cost and latency

This is why token optimization matters in production AI systems.

Context Window

The context window defines how much information the model can “remember” during a conversation or task.

A larger context window allows the model to:

Analyze larger documents

Maintain longer conversations

Work with more instructions simultaneously

However, larger context windows also increase compute cost and latency.

Parameters

Parameters are the learned weights inside the model.

In general:

More parameters → greater capability

But also → higher infrastructure requirements

Modern frontier models contain billions or even trillions of parameters trained across massive GPU clusters.

Training vs Inference

LLMs operate in two major phases:

Pretraining

During pretraining, the model learns language patterns from massive datasets collected from books, websites, codebases, research papers, and other sources.

This phase is extremely compute-intensive and can cost millions of dollars.

Inference

Inference happens when users interact with the model after training is complete.

Every time you send a prompt to ChatGPT or Claude, the model performs inference to generate a response.

Most AI engineers today focus more on inference applications rather than training foundational models from scratch.

Why LLMs Changed Software Forever

Traditional software behaves deterministically:

- Same input → same output

LLMs behave probabilistically:

Same input can generate different outputs

Responses are context-dependent

Systems become more flexible and adaptive

Instead of hardcoding every rule manually, developers can now build systems where language itself becomes the interface.

That is why modern AI engineering is less about building isolated models and more about orchestrating intelligent systems around LLMs.

And this is where concepts like RAG, vector databases, memory systems, and AI agents become critically important.

Retrieval-Augmented Generation (RAG)

One of the biggest limitations of Large Language Models is that they do not truly “know” real-time or private information.

An LLM only knows what it learned during training. This creates several problems:

Knowledge becomes outdated (Knowledge Cut-off)

Models hallucinate facts

Private company data cannot be accessed directly

Responses may lack domain-specific accuracy

This is where Retrieval-Augmented Generation (RAG) becomes extremely important.

RAG is an architecture pattern that enhances LLMs by retrieving relevant external information before generating a response.

Instead of relying only on what the model memorized during training, the system dynamically fetches relevant context from external sources such as:

PDFs

Documentation

Databases

Notion pages

Internal company knowledge bases

APIs

Websites

The retrieved information is then injected into the prompt so the LLM can generate more accurate and grounded responses.

How RAG Works

A typical RAG pipeline consists of three major stages:

1. Indexing Knowledge

Before retrieval can happen, documents must first be processed and stored.

This usually involves:

Splitting documents into chunks

Generating embeddings for each chunk

Storing embeddings inside a vector database

For example:

A 200-page PDF might be split into hundreds of smaller chunks

Each chunk is converted into a numerical representation (embedding)

These embeddings allow semantic search instead of keyword-only matching

2. Retrieval

When a user sends a query:

The query itself is converted into an embedding

The system searches for semantically similar chunks

Relevant information is retrieved from the vector database

Unlike traditional search systems, semantic retrieval understands meaning rather than exact keywords.

For example:

“How do I deploy this application?”

“How can I host this project?”

Both queries may retrieve similar documents even though the wording is different.

3. Generation

The retrieved context is appended to the user’s prompt before sending it to the LLM.

The model now generates responses grounded in external information rather than relying purely on memorized knowledge.

This dramatically improves:

Accuracy

Freshness

Reliability

Personalization

Sparse Retrieval vs Dense Retrieval

There are two major retrieval approaches commonly used in RAG systems.

Sparse Retrieval

Traditional methods like BM25 rely on keyword matching.

Advantages:

Fast

Interpretable

Good for exact terms

Disadvantages:

Weak semantic understanding

Struggles with paraphrased queries

Dense Retrieval

Dense retrieval uses embeddings generated by transformer models.

Advantages:

Understands semantic meaning

Better contextual matching

More flexible retrieval

Disadvantages:

Higher infrastructure cost

Requires vector databases

Most modern AI applications use dense retrieval or hybrid approaches combining both methods.

Challenges in Building Good RAG Systems

Although RAG sounds simple conceptually, building high-quality RAG systems is surprisingly difficult.

Some common engineering challenges include:

Chunking Strategy

Chunks that are too small lose context.

Chunks that are too large reduce retrieval precision.

Retrieval Quality

Poor retrieval leads to poor generation.

Even the best LLM cannot answer correctly if irrelevant context is retrieved.

Latency

RAG pipelines introduce additional retrieval steps before generation.

This increases response time.

Context Window Limits

Too much retrieved context can overwhelm the model and reduce answer quality.

Data Freshness

Knowledge bases must be updated continuously to keep information relevant.

What is a Vector Database?

A vector database is a system designed to store and retrieve embeddings efficiently.

Instead of performing exact-match queries like traditional SQL databases, vector databases perform similarity search.

This allows AI systems to retrieve semantically relevant information extremely quickly.

Popular vector databases include:

Pinecone Pinecone

FAISS FAISS

Chroma Chroma

Milvus Milvus

These systems are commonly used in:

RAG pipelines

AI search engines

Recommendation systems

AI assistants

Memory systems for agents

Why Embeddings Matter

Embeddings are one of the most important building blocks in modern AI engineering because they allow machines to understand semantic relationships between data.

They power capabilities such as:

Semantic search

Document retrieval

Recommendation engines

Context-aware AI systems

Personalized AI experiences

Without embeddings, most modern RAG and AI memory systems would not work effectively.

Fine-Tuning and Model Adaptation

Although modern LLMs are incredibly capable out of the box, they are still general-purpose models.

In real-world applications, organizations often need models to:

Understand domain-specific knowledge

Follow custom response styles

Perform specialized tasks

Adapt to internal workflows

This is where fine-tuning becomes important.

Fine-tuning is the process of adapting a pretrained model using additional training data for a specific use case.

Instead of training a model from scratch, developers build on top of an already capable foundation model.

Full Fine-Tuning vs Efficient Adaptation

Training all model parameters is extremely expensive.

Because of this, modern AI systems often use more efficient adaptation techniques instead of full retraining.

Popular approaches include:

LoRA (Low-Rank Adaptation)

Adapters

Prompt tuning

Instruction tuning

These methods reduce compute cost while still enabling strong customization.

Fine-Tuning vs RAG

One of the most common misconceptions in AI engineering is confusing RAG with fine-tuning.

They solve different problems.

RAG is used for:

Injecting external knowledge

Accessing real-time information

Retrieving private documents

Improving factual grounding

Fine-tuning is used for:

Changing model behavior

Improving formatting/style

Specializing tasks

Adapting workflows

A good rule of thumb:

Use RAG for knowledge.

Use fine-tuning for behavior.

Many production AI systems combine both approaches together.

Challenges of Fine-Tuning

Fine-tuning is powerful, but it also introduces challenges.

1. Data Quality

Poor training data leads to poor model behavior.

2. Overfitting

Models may become too specialized and lose general capability.

3. Cost

Training and hosting customized models can become expensive.

4. Evaluation

Testing AI systems reliably is still a major engineering challenge.

This is why many teams first optimize prompting and RAG pipelines before moving to fine-tuning.

Memory in AI Systems

One of the biggest limitations of traditional LLM interactions is that conversations are often stateless.

The model may respond intelligently within a session, but once the context window is gone, the information disappears.

This creates an important challenge:

How can AI systems remember useful information over time?

This is where memory systems become essential in modern AI applications.

Short-Term vs Long-Term Memory

AI memory is commonly divided into two categories.

Short-Term Memory

Short-term memory exists inside the model’s context window.

This includes:

Current conversation history

Instructions

Retrieved documents

Temporary context

The limitation is that context windows are finite.

Once the limit is exceeded, older information is removed.

Long-Term Memory

Long-term memory persists information outside the model itself.

This memory is usually stored using:

Vector databases

Structured databases

Knowledge graphs

External storage systems

The system can later retrieve this information when needed.

Examples include:

Remembering user preferences

Past conversations

Personalized recommendations

Workflow history

Project context

How AI Memory Works in Practice

Most memory systems follow a simple pattern:

Store important interactions externally

Convert them into embeddings

Retrieve relevant memories during future interactions

Inject them back into the prompt

This allows AI systems to behave more consistently and contextually over time.

In many ways, memory systems are an extension of RAG architectures.

What is Agentic AI?

Agentic AI refers to AI systems capable of acting more autonomously toward goals rather than waiting for step-by-step human instructions.

Traditional AI:

- Responds to prompts

Agentic AI:

Plans

Decides

Iterates

Executes workflows

This is a major shift in how software is being designed.

MCP (Model Context Protocol)

\

As AI systems become more connected to external tools, one major challenge starts to appear:

How can LLMs securely and reliably interact with different applications, databases, and services?

Every tool traditionally required:

Custom integrations

Separate APIs

Manual authentication logic

Tool-specific implementations

This quickly becomes difficult to scale.

This is where the Model Context Protocol (MCP) becomes important.

MCP is an open protocol designed to standardize how AI models communicate with external tools, data sources, and applications.

Instead of building custom integrations for every system individually, MCP creates a common interface between AI systems and external resources.

You can think of MCP as:

“USB-C for AI applications.”

How MCP Works

At a high level, MCP introduces a standardized communication layer between:

AI models

Tools

External applications

An MCP-compatible system can expose:

Tools

Resources

Context

Actions

to an AI model in a structured way.

Instead of hardcoding integrations directly into the application, tools become dynamically discoverable and reusable.

For example, an AI assistant could:

Access files from a filesystem

Query a database

Use GitHub tools

Interact with cloud infrastructure

Read documentation

all through a standardized protocol.

MCP and AI Agents

MCP becomes especially powerful when combined with AI agents.

Agents require access to:

Context

Memory

External tools

Execution environments

MCP provides a cleaner and more scalable way to manage these interactions.

Rather than building isolated agent architectures for every application, developers can build reusable ecosystems of tools that AI systems can access dynamically.

This is one of the reasons MCP is gaining significant attention in the AI ecosystem.

A Simple Mental Model of Modern AI Systems

A modern AI application often looks something like this:

LLM → reasoning engine

RAG → retrieves external knowledge

Vector Database → stores semantic embeddings

Memory System → maintains context over time

Fine-Tuning → adapts behavior for specific tasks

MCP / Tools → connects external systems and APIs

AI Agent Layer → orchestrates actions and workflows

Instead of relying on a single model, modern AI systems combine multiple layers working together.

This shift is transforming AI from:

- Simple chat interfaces

into:

- Autonomous, context-aware software systems